Storage

Storage blocks are not recommended

Storage blocks are part of the legacy block-based deployment model. The recommended options for creating a deployment are instead the serve or runner-based Python creation methods, or workers and work pools with prefect deploy via the CLI.

With serve or runner-based Python creation methods, you specify flow code storage in the Python file. With the work pools and workers style of flow deployment, you specify flow code storage during the interactive prefect deploy CLI experience and in its resulting prefect.yaml file.

Storage lets you configure how flow code for deployments is persisted and how Prefect workers (or legacy agents) retrieve it. Any time you build a block-based deployment, a storage block is used to upload the entire directory containing your workflow code (along with supporting files) to its configured location. This helps keep your relative imports, configuration files, and other supporting files portable. Your environment dependencies (for example, external Python packages) still need to be managed separately.
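To make the directory-upload behavior concrete, here is a conceptual sketch that uses only the Python standard library. It is not Prefect's implementation. The helper names upload_flow_directory and relative_files are hypothetical, and a local directory stands in for the configured storage location. The sketch shows why relative imports and configuration files keep working: every file travels with the flow script and keeps the same relative path.

```python
import shutil
import tempfile
from pathlib import Path


def upload_flow_directory(flow_dir: Path, storage_root: Path) -> Path:
    """Copy the whole flow directory into a stand-in storage location.

    Hypothetical helper for illustration only: a local directory plays the
    role of the configured storage location (S3, GCS, and so on).
    """
    destination = storage_root / flow_dir.name
    # Copy every file and subdirectory, preserving relative paths
    shutil.copytree(flow_dir, destination, dirs_exist_ok=True)
    return destination


def relative_files(root: Path) -> set:
    """Return every file path under root, relative to root."""
    return {p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()}


with tempfile.TemporaryDirectory() as tmp:
    project = Path(tmp) / "flows"
    (project / "utils").mkdir(parents=True)
    (project / "my_flow.py").write_text("from utils.helpers import greet\n")
    (project / "utils" / "helpers.py").write_text("def greet():\n    return 'hi'\n")
    (project / "config.yaml").write_text("retries: 3\n")

    uploaded = upload_flow_directory(project, Path(tmp) / "storage")
    # The supporting module and config file travel with the flow script
    print(sorted(relative_files(uploaded)))
```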

If no storage is explicitly configured, Prefect uses LocalFileSystem storage by default. Local storage works well for many local flow run scenarios, especially when testing and getting started. However, because local storage is not portable to other machines, many use cases are better served by remote storage such as S3 or Google Cloud Storage.

Prefect supports creating multiple storage configurations and switching between them as needed.

Storage uses blocks

Blocks are the Prefect technology underlying storage, and they enable you to do much more than configure storage.

In addition to creating storage blocks via the Prefect CLI, you can create storage blocks and other kinds of block configuration objects in the Prefect UI and Prefect Cloud.

Configuring storage for a deployment

When building a deployment for a workflow, you have two options for configuring workflow storage:

- Use the default local storage

- Preconfigure a storage block to use

Using the default

Any time you run prefect deployment build without the --storage-block flag, Prefect uses a default LocalFileSystem block. This block always uses your current working directory as its basepath, which is usually what you want. You can see the block's settings by inspecting the deployment.yaml file that Prefect creates after you run prefect deployment build.

A deployment stored on a local file system generally can't be run on other machines, but any agent running on the same machine can run it successfully.
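The two configuration options differ only in whether you pass --storage-block to prefect deployment build. The hypothetical helper build_deployment_command below assembles the argument list for either case so the difference is visible. It does not call Prefect. To execute the command, you would pass the returned list to subprocess.run yourself.

```python
import shlex


def build_deployment_command(entrypoint, name, storage_block=None):
    """Assemble argv for `prefect deployment build` (hypothetical helper).

    If storage_block is None, the --storage-block flag is omitted, so Prefect
    falls back to the default LocalFileSystem block.
    """
    args = ["prefect", "deployment", "build", entrypoint, "--name", name]
    if storage_block is not None:
        # Slug format: block-type/block-name, for example "s3/example-block"
        args += ["--storage-block", storage_block]
    return args


entrypoint = "./flows/my_flow.py:my_flow"

# Default local storage: no --storage-block flag
print(shlex.join(build_deployment_command(entrypoint, "Example Deployment")))

# Preconfigured storage block
print(shlex.join(build_deployment_command(entrypoint, "Example Deployment", "s3/example-block")))
```

Because the storage choice is a single argument, switching between storage configurations comes down to passing a different block slug.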

Supported storage blocks

Current options for deployment storage blocks include:

| Storage | Description | Required Library |
|---|---|---|
| Local File System | Store code in a run's local file system. | |
| Remote File System | Store code in any filesystem supported by fsspec. | |
| AWS S3 Storage | Store code in an AWS S3 bucket. | s3fs |
| Azure Storage | Store code in Azure Data Lake and Azure Blob Storage. | adlfs |
| GitHub Storage | Store code in a GitHub repository. | |
| Google Cloud Storage | Store code in a Google Cloud Platform (GCP) Cloud Storage bucket. | gcsfs |
| SMB | Store code in SMB shared network storage. | smbprotocol |
| GitLab Repository | Store code in a GitLab repository. | prefect-gitlab |
| Bitbucket Repository | Store code in a Bitbucket repository. | prefect-bitbucket |

Accessing files may require storage filesystem libraries

Note that the appropriate filesystem library supporting the storage location must be installed prior to building a deployment with a storage block or accessing the storage location from flow scripts.

For example, the AWS S3 Storage block requires the s3fs library.

See Filesystem package dependencies for more information about configuring filesystem libraries in your execution environment.
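To fail fast when a filesystem library is missing, you could run a check like the following before building a deployment. This is a standard-library sketch, not a Prefect feature. The names missing_library and REQUIRED_LIBRARIES are hypothetical, and the mapping copies the Required Library column of the table above. The import name paired with each package is an assumption about how that package is imported.

```python
import importlib.util

# Required libraries from the table above: storage -> (package to install, import name).
# The import names are assumptions about how each package is imported.
REQUIRED_LIBRARIES = {
    "AWS S3 Storage": ("s3fs", "s3fs"),
    "Azure Storage": ("adlfs", "adlfs"),
    "Google Cloud Storage": ("gcsfs", "gcsfs"),
    "SMB": ("smbprotocol", "smbprotocol"),
    "GitLab Repository": ("prefect-gitlab", "prefect_gitlab"),
    "Bitbucket Repository": ("prefect-bitbucket", "prefect_bitbucket"),
}


def missing_library(storage, requirements=REQUIRED_LIBRARIES):
    """Return the package to install for `storage`, or None if nothing is missing."""
    if storage not in requirements:
        # For example, Local File System: no extra library is listed
        return None
    package, module = requirements[storage]
    # find_spec returns None when a top-level module cannot be imported
    if importlib.util.find_spec(module) is None:
        return package
    return None


for storage in ["Local File System", "AWS S3 Storage"]:
    package = missing_library(storage)
    print(storage, "->", f"pip install {package}" if package else "ready")
```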

Configuring a block

You can create these blocks either via the UI or via Python.

You can create, edit, and manage storage blocks in the Prefect UI and Prefect Cloud. On a Prefect server, blocks are created in the server's database. On Prefect Cloud, blocks are created in a workspace.

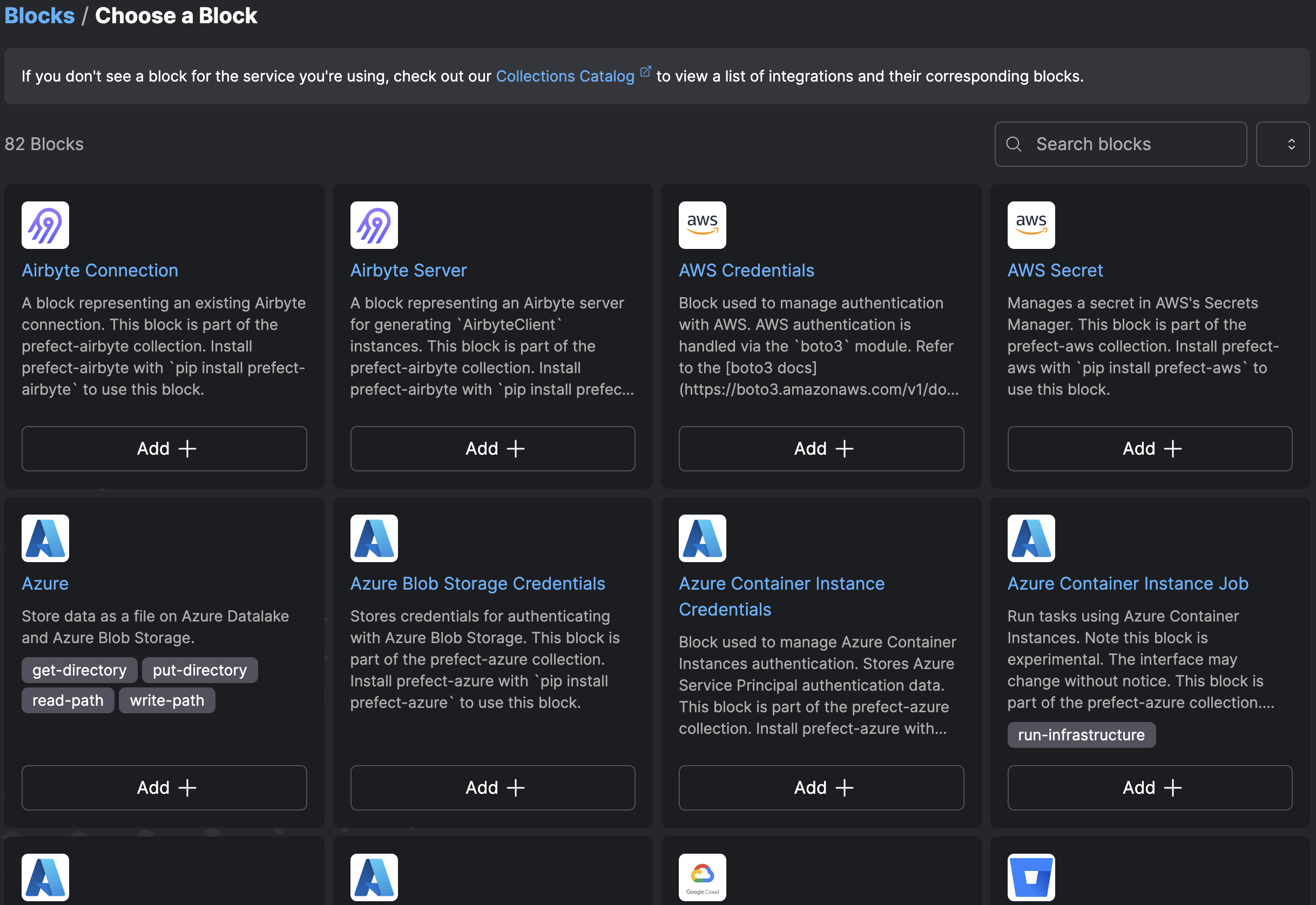

To create a new block, select the + button. Prefect displays a library of block types you can configure to create blocks to be used by your flows.

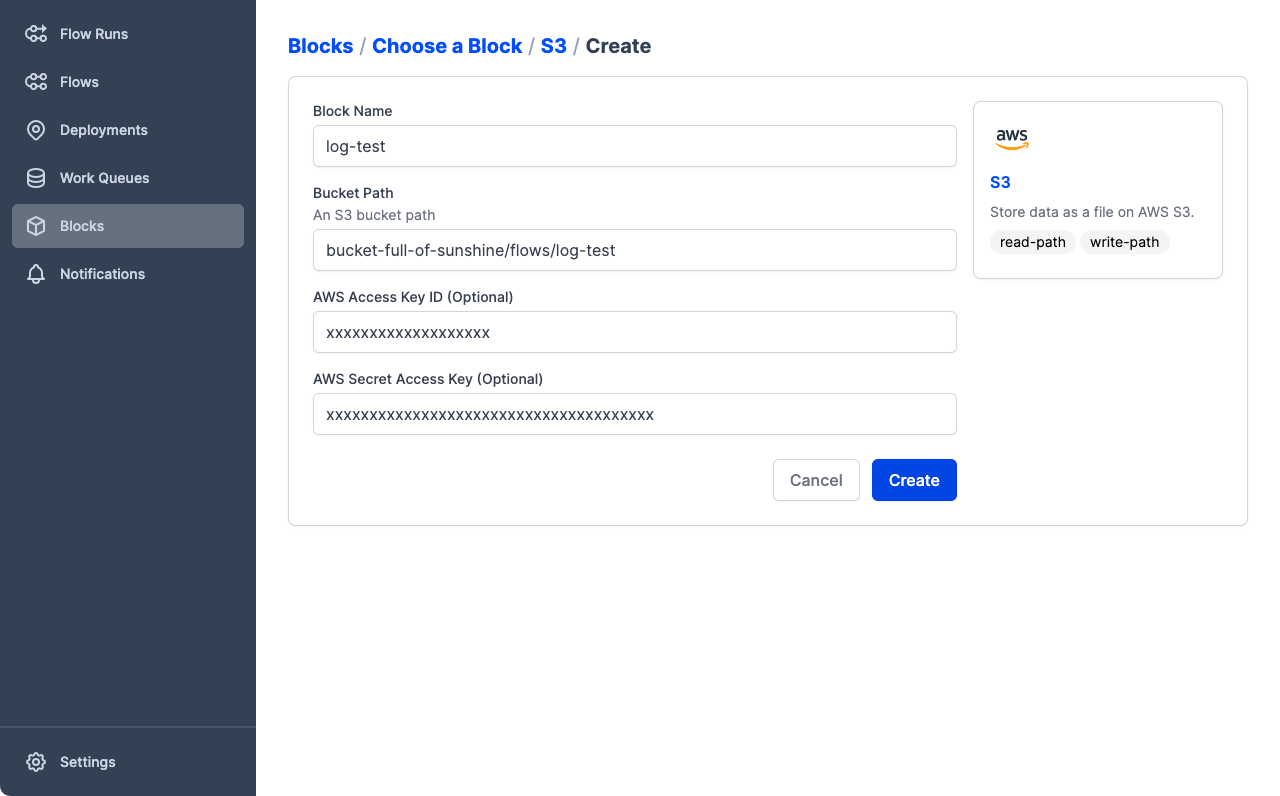

Select Add + to configure a new storage block based on a specific block type. Prefect displays a Create page where you can specify storage settings.

You can also create blocks using the Prefect Python API:

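```python
from prefect.filesystems import S3

# Placeholder credentials: replace with your own values
block = S3(
    bucket_path="my-bucket/a-sub-directory",
    aws_access_key_id="foo",
    aws_secret_access_key="bar",
)

# Save this configuration as a block named "example-block"
block.save("example-block")
```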

This block configuration is now available to anyone with appropriate access to your Prefect API. You can use this block to build a deployment by passing its slug to the prefect deployment build command. The storage block slug has the format block-type/block-name. In this case, the slug is s3/example-block for an AWS S3 block named example-block. See block identifiers for details.

```bash
prefect deployment build ./flows/my_flow.py:my_flow --name "Example Deployment" --storage-block s3/example-block
```

This command uploads the contents of your flow's directory to the designated storage location and then persists the full deployment specification to a newly created deployment.yaml file. For more information, see Deployments.
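The slug passed to --storage-block must follow the block-type/block-name format. A small validation step can catch mistakes such as passing only the block name. The helper below, parse_block_slug, is hypothetical and not part of Prefect's API. It only splits and checks the string. Rejecting extra slashes is this sketch's own assumption.

```python
def parse_block_slug(slug):
    """Split a storage block slug of the form block-type/block-name.

    Hypothetical helper for illustration; it is not part of Prefect's API.
    """
    block_type, sep, block_name = slug.partition("/")
    # Reject a missing separator, empty parts, or extra slashes (sketch assumption)
    if not sep or not block_type or not block_name or "/" in block_name:
        raise ValueError(f"expected 'block-type/block-name', got {slug!r}")
    return block_type, block_name


print(parse_block_slug("s3/example-block"))  # ('s3', 'example-block')
```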